What Is Risk-Based Vulnerability Management?

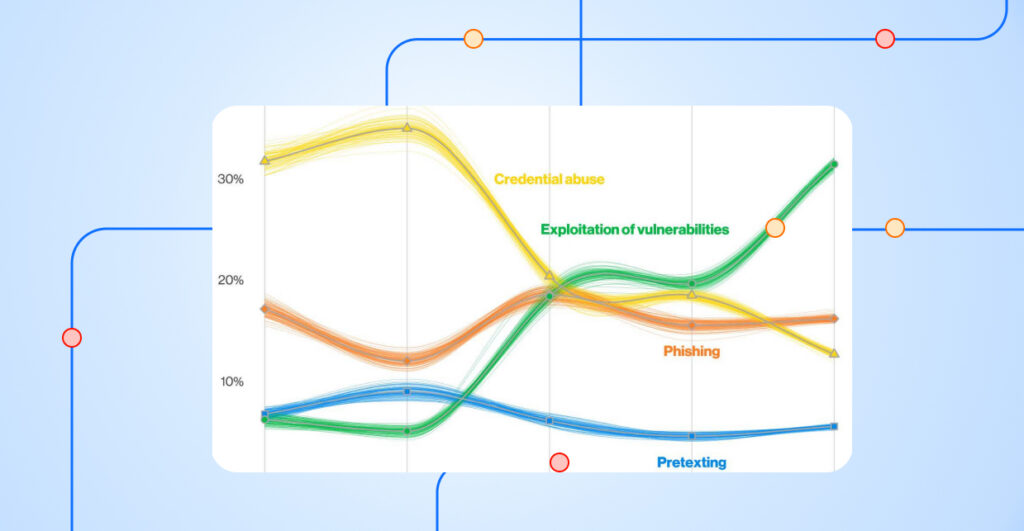

Risk-based vulnerability management (RBVM) is an approach that prioritizes remediating the vulnerabilities that pose the greatest risk to an organization. While some organizations depend solely on independent scoring methodologies like CVSS or EPSS, effective RBVM also takes into account the business criticality of assets and ties in threat intelligence to make prioritization decisions.
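To make the idea concrete, here is a minimal Python sketch of how those inputs might be blended into a single ranking. It is an illustration only, not how Nucleus or any other product calculates risk: the `Finding` fields, the 1 to 5 asset criticality scale, the multiplicative formula, and the placeholder CVE names are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve_id: str               # placeholder identifiers, not real CVEs
    cvss: float               # CVSS base score, 0.0 to 10.0
    epss: float               # EPSS probability of exploitation, 0.0 to 1.0
    asset_criticality: int    # hypothetical scale: 1 (low) to 5 (business critical)
    exploited_in_wild: bool   # assumed to come from a threat intelligence feed


def risk_score(f: Finding) -> float:
    """Blend severity, likelihood, and business context into a 0-100 score."""
    severity = f.cvss / 10.0
    # Confirmed exploitation overrides the statistical estimate.
    likelihood = 1.0 if f.exploited_in_wild else f.epss
    business = f.asset_criticality / 5.0
    return round(100 * severity * likelihood * business, 1)


findings = [
    Finding("CVE-A", cvss=9.8, epss=0.02, asset_criticality=2, exploited_in_wild=False),
    Finding("CVE-B", cvss=5.0, epss=0.90, asset_criticality=5, exploited_in_wild=True),
    Finding("CVE-C", cvss=7.5, epss=0.40, asset_criticality=3, exploited_in_wild=False),
]

# Highest risk first.
ranked = sorted(findings, key=risk_score, reverse=True)
for f in ranked:
    print(f.cve_id, risk_score(f))
```

In this made-up data, the medium-severity CVE-B on a business-critical asset outranks the 9.8-rated CVE-A, because it is known to be exploited and CVE-A is not.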

Prioritizing Risk Without Threat Intelligence

Let’s look at published CVEs and their CVSS scores without applying threat intelligence. The Nucleus Vulnerability Intelligence Platform dataset contains nearly 300k CVEs. Over 43% of these are rated as high or critical; about 13% earn the dreaded critical rating.

The conventional advice to focus on highs and criticals does little to reduce our workload. This approach assumes that vulnerabilities are distributed on a bell curve or have an even distribution. Neither is the case. Compare the 43% of high and critical vulnerabilities with those rated as low—just 7.3%.
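If you want to check the distribution in your own data, a sketch like the following will do it. It assumes the CVSS v3.x qualitative bands (low 0.1 to 3.9, medium 4.0 to 6.9, high 7.0 to 8.9, critical 9.0 to 10.0); the sample scores are made up and are not drawn from the Nucleus dataset.

```python
from collections import Counter


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity band."""
    if score == 0.0:
        return "none"
    if score < 4.0:
        return "low"
    if score < 7.0:
        return "medium"
    if score < 9.0:
        return "high"
    return "critical"


def severity_shares(scores):
    """Return the percentage of scores that fall into each severity band."""
    counts = Counter(cvss_severity(s) for s in scores)
    total = len(scores)
    return {band: round(100 * n / total, 1) for band, n in counts.items()}


# Made-up sample; substitute the base scores exported from your own scanner.
sample_scores = [9.8, 7.5, 8.1, 5.3, 6.5, 4.3, 9.1, 2.1, 5.0, 7.2]
shares = severity_shares(sample_scores)
print(shares)
```

If the high and critical shares together dwarf the low share, as they do in the published CVE data, then "fix the highs and criticals" is not narrowing your workload much.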

And the news gets worse. Not only are high or critical vulnerabilities more numerous than those rated as medium and low, but those vulnerabilities occur more frequently in the real world as well. In some organizations, we’ve heard stories where up to 80% of vulnerabilities rate as high or critical. That simply defies logic!

Measuring A Vulnerability’s Impact Over Time

While there is room in the CVSS equation to adjust a vulnerability’s score based on threat intelligence, nobody recalculates it for everything. It’s generally a one-and-done deal, and the result measures the potential for a given vulnerability to hurt you.

But it’s like a scouting report in professional sports. Not everything lives up to its potential. For every Bo Jackson or Gregg Jefferies, ‘generational’ talents who didn’t live up to their potential, there’s a corresponding Mike Piazza, a late-round throw-in draft pick who became a Hall of Famer.

What do baseball draft picks have to do with vulnerability management? CVSS scoring, like scouting reports, is imperfect. CVSS puts too much focus on vulnerabilities that never live up to their potential, like Jackson or Jefferies, while leading you to ignore an overachiever like Piazza.

And whether you’re talking infosec, baseball, or anything else in life, prioritizing potential over results is simply a mistake.

Challenges with CVSS Vulnerability Scoring

The most famous example of an overachieving vulnerability is Heartbleed. It’s easy to forget that Heartbleed only scored as a 5 under CVSS. But Heartbleed was the vulnerability of 2014, if not the decade. Heartbleed was so extreme we looked past its CVSS score and sounded the alarms when it hit the newswires.

The reason for that was simple: It broke SSL. Heartbleed exposed the hole in CVSS because CVSS treats all information disclosure the same. Since it’s no secret what kind of information a given web server might be processing, attackers could just look for servers that solicit the kind of information they wanted.

Risk-based vulnerability management takes these kinds of factors into consideration, elevating vulnerabilities like Heartbleed from medium to critical. But just as important, it refactors underachievers. Critical Java vulnerabilities come along every quarter. Organizations repeatedly fail to treat those with the same priority they gave Heartbleed and Log4j, and by and large they get away with it. Otherwise, you would expect to hear about at least one high-profile breach every quarter.
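As a rough illustration of re-rating in both directions, consider the sketch below. The rule it encodes is a deliberately simple assumption for this example, not a published methodology: evidence of real-world exploitation lifts a vulnerability to high or critical, and the absence of such evidence caps it at medium. The function name and both boolean inputs are hypothetical.

```python
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def rerate(cvss_band: str, exploited_in_wild: bool, widespread_impact: bool) -> str:
    """Re-rate a CVSS severity band using threat evidence (illustrative rule only)."""
    if exploited_in_wild:
        # Overachievers: attackers are using it, so it earns a top rating.
        return "critical" if widespread_impact else "high"
    # Underachievers: no sign of attacker use, so cap the rating at medium.
    capped = min(SEVERITY_ORDER.index(cvss_band), SEVERITY_ORDER.index("medium"))
    return SEVERITY_ORDER[capped]


# A Heartbleed-like case: medium by CVSS, exploited, broad impact.
print(rerate("medium", exploited_in_wild=True, widespread_impact=True))
# A critical by CVSS with no evidence of exploitation.
print(rerate("critical", exploited_in_wild=False, widespread_impact=False))
```

A real program would draw on richer signals than two booleans, but the shape is the same: observed attacker behavior, not theoretical potential, decides what reaches the top of the list.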

The question is, how does an organization go about using data, rather than a gut feeling, to operationalize risk-based vulnerability management?

Which Vulnerabilities Should Take Priority?

A surprisingly small number of vulnerabilities end up becoming a real problem. According to our partners at Mandiant, somewhere between 1% and 2% of vulnerabilities end up in attacker toolboxes and therefore actually deserve the high or critical rating.

That 1% rule applies often in life. What kid didn’t grow up hoping to become an astronaut, only to discover that 1% of military recruits become pilots, and only 1% of those become astronauts? Or consider athletes, who discover that about 1% of high school athletes get to play professionally, and about 1% of those become Hall of Famers.

If more than one new vulnerability a year earns a critical rating, we’re having a bad year as an industry. And fewer than 10 a month on average deserve the high rating. That’s a manageable workload.

The Value of Risk-Based Vulnerability Management

You can’t give 110% all the time. I have seen analysts state that, in their observations, most businesses have no problem fixing 25% of their vulnerabilities, so we must tell them to fix the right ones. That’s based on the incorrect assumption that all vulnerability fixes deploy at an equal success rate, which is simply not true. But even if everything that can hurt you turns out to have fixes that are notoriously difficult to implement, when you’re talking 2%, it’s manageable.

If the idea of only fixing 2% of your vulnerabilities sounds sketchy, I have good news for you. Most years, between 20% and 32% of vulnerabilities fall into the medium category. So, you can raise the bar to fixing all your critical, high, and medium vulnerabilities, still fix less than half of what you would under a non-risk-based approach, and do substantially more to lower the probability of a breach while not wearing out your remediation teams.
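The arithmetic behind that claim can be worked through with the figures quoted in this article. One assumption is needed for the comparison: the non-risk-based baseline is taken to be "fix everything CVSS rates medium or above," estimated here as everything that is not rated low (100% minus the 7.3% low share), ignoring unscored CVEs.

```python
# Figures quoted in this article, as percentages of all vulnerabilities.
high_critical_by_risk = 2.0      # upper end of the 1-2% that attackers actually use
medium_by_risk = (20.0, 32.0)    # typical yearly range for the medium category
cvss_low = 7.3                   # share rated low by CVSS

# Risk-based workload: critical + high + medium under a risk rating.
risk_based = tuple(high_critical_by_risk + m for m in medium_by_risk)

# ASSUMPTION: CVSS-based workload is everything not rated low by CVSS.
cvss_medium_and_up = 100.0 - cvss_low

# Worst case for the risk-based approach relative to the CVSS baseline.
worst_case_ratio = risk_based[1] / cvss_medium_and_up

print(f"Risk-based workload: {risk_based[0]:.0f}% to {risk_based[1]:.0f}%")
print(f"CVSS medium-and-up workload: about {cvss_medium_and_up:.1f}%")
print(f"Worst-case ratio: {worst_case_ratio:.2f}")
```

Even at the top of the range, the risk-based workload comes to roughly a third of vulnerabilities, which is well under half of the CVSS-based figure.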

Operationalize Your Vulnerability Management Program with RBVM

To transform your vulnerability management program using the Nucleus Platform, the first thing you want to do is connect your vulnerability management tools to Nucleus. Once you’ve ingested some data, navigate to Vulnerabilities and click on Active. Click the widget that says Critical Risk Rating. These are the vulnerabilities that Mandiant Threat Intelligence deems critical.

You’ll likely have one or more of these vulnerabilities in your environment. Every month, if not every week, some new vulnerability gets discovered, gets a bunch of publicity, and distracts us from whatever we were already working on. And before you have that finished, another one comes out. Don’t let the hype machine set your priorities. Log4j was a very unusual example of a vulnerability that went from being newly discovered to being your biggest problem almost immediately.

The key to having a functioning risk-based vulnerability management program, or better yet, a true threat and vulnerability management program, is not letting high-publicity vulnerabilities distract you from existing vulnerabilities that pose a greater threat to your organization. Keep your focus on the items that are most likely to do your organization harm, whether that item is new or several years old.

Start with those and work on getting them resolved. You’ll want to reach a point where you can fix new critical vulnerabilities very quickly after they come in, but it’s OK if you’re not there yet. You’re just getting started.

Meanwhile, deploy this month’s updates as they come out. Most new vulnerabilities won’t have high risk ratings yet, but I always found it was easier to just deploy all of them than to pick and choose. Deploying new updates will knock them out before they can become a threat and help cut into your backlog, since some of them will supersede older updates.

It’s easier said than done, but vulnerability management is a regular discipline above all else. Perform that discipline regularly, month after month, and you’ll see your risk trending downward toward an acceptable level. Once you reach that acceptable level, you’ll find maintaining it is easier than reaching it.
