TL;DR

This guide equips enterprise and public sector buyers with a practical evaluation framework: clear definitions, a side-by-side comparison, an objective checklist, and outcome-based metrics. It emphasizes integration depth, workflow automation, and compliance readiness, so you can select a platform that becomes the orchestration layer across tools and teams.

Strategic Overview

If you’re asking what the best exposure assessment platform is, you’re likely dealing with fragmented data, inconsistent prioritization, and stalled remediation. The right platform not only surfaces risk; it helps you reduce it in a way that holds up operationally and in front of auditors.

Exposure assessment platforms (EAPs) are built for continuous threat and exposure management (CTEM), using context-aware risk scoring and remediation orchestration to reduce real business risk, not just chase CVE lists. This guide distills what to look for, how EAPs differ from legacy vulnerability management scanners, and how to evaluate in a proof of value.

Understanding Exposure Assessment Platforms

Exposure assessment platforms aggregate, normalize, and correlate vulnerability, configuration, identity, and threat intelligence data. They drive CTEM programs with contextual, threat-aware prioritization and remediation orchestration. Unlike legacy vulnerability management tools that focus on detecting CVEs, EAPs unify data from diverse attack surfaces, including scanners and cloud, endpoint, identity, and IT systems, to focus on the exposures that materially elevate business risk.

According to Gartner, “EAPs are designed to unify visibility across internal, external, cloud, and end-user environments, and are increasingly integrated into broader CTEM programs.” (Gartner® Magic Quadrant™ for Exposure Assessment Platforms)

Key concepts in modern EAPs include:

- Alignment with CTEM stages

- Risk-based prioritization with context-aware risk scoring

- Data unification and exposure correlation across assets, security findings, and identities

Vulnerability Management vs Exposure Assessment Platforms

Most teams don’t outgrow vulnerability management because they need more findings. They outgrow it because they can’t consistently decide what to fix, who owns it, or whether it’s actually reduced risk.

| Capability | Traditional Vulnerability Management | Exposure Assessment Platform |
|---|---|---|
| Findings sources | Primarily detection (scanning) | Unifies scanners, CSPM, EDR/XDR, IAM, CMDB, ITSM, code pipelines |
| Prioritization | Severity-based (e.g., CVSS) | Context-aware (exploit intel, asset criticality, exposure signals) |
| Frequency | Periodic | Continuous |
| Outputs | Findings and lists | Explainable priorities, remediation-ready tasks |
| Actionability | Manual triage | Native remediation orchestration and SLA tracking |
| Outcomes | Volume reduction | Measurable, defensible risk reduction |

Prioritization with Context

For reference, CVSS provides a standardized severity model, but it is not context-aware on its own (see FIRST CVSS). Meanwhile, only 6% of published vulnerabilities are likely to be exploited in the wild, which underscores the need for exploit-aware prioritization (see FIRST EPSS). Federal guidance likewise emphasizes focusing on known exploited vulnerabilities (see the CISA KEV catalog and CISA Binding Operational Directive 22-01).
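To illustrate the difference, here is a minimal Python sketch that ranks the same findings two ways: by CVSS base score alone, and exploit-aware, where KEV membership comes first and EPSS probability breaks ties ahead of CVSS. The placeholder IDs, scores, and ordering rule are hypothetical illustrations, not real feed data or a prescribed model.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    cve: str      # hypothetical placeholder IDs, not real CVEs
    cvss: float   # CVSS base score, 0.0-10.0
    epss: float   # EPSS probability of exploitation, 0.0-1.0
    in_kev: bool  # listed in the CISA KEV catalog

def rank_by_cvss(findings):
    """Severity-only ordering: highest CVSS base score first."""
    return sorted(findings, key=lambda f: f.cvss, reverse=True)

def rank_exploit_aware(findings):
    """KEV-listed first, then higher EPSS, then higher CVSS as a tiebreaker."""
    return sorted(findings, key=lambda f: (f.in_kev, f.epss, f.cvss), reverse=True)

findings = [
    Finding("CVE-A", cvss=9.8, epss=0.02, in_kev=False),
    Finding("CVE-B", cvss=7.5, epss=0.91, in_kev=True),
    Finding("CVE-C", cvss=8.1, epss=0.40, in_kev=False),
]

print([f.cve for f in rank_by_cvss(findings)])        # ['CVE-A', 'CVE-C', 'CVE-B']
print([f.cve for f in rank_exploit_aware(findings)])  # ['CVE-B', 'CVE-C', 'CVE-A']
```

The highest-severity finding drops to last once exploit signals are considered, which is exactly the gap severity-only triage leaves open.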

Key Features of Modern Exposure Management Platforms

Modern EAPs are not scanner extensions. They are decision-and-action systems that:

- Ingest and unify data from vulnerability scanners, CSPM, EDR/XDR, CMDB, IAM, ITSM, and DevOps tools.

- Deliver organization-aware risk scoring informed by exploit intelligence (e.g., EPSS, KEV) and by asset and business context (e.g., asset criticality, data sensitivity, internet exposure).

- Mobilize action by translating technical risk into who-owns-what, what-to-fix, and why-now.

- Orchestrate workflows that convert prioritized exposures into clear remediation tasks with SLA tracking.

- Reassess risk continuously as assets, identities, and environments change.

Defining Your Security Goals and Operational Requirements

Risk Reduction Objectives

Set measurable, time-bound targets, for example, “cut exploitable exposure clusters by X% in Y days.”

SLA Adherence Requirements

Measure remediation times against the SLA requirements established for each business unit and asset class.
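As a rough illustration, the sketch below computes SLA adherence per business unit from closed findings, using a hypothetical policy table keyed by asset class and severity. The policy values, field names, and asset classes are assumptions for the example, not recommended targets.

```python
from collections import defaultdict
from datetime import date

# Hypothetical SLA policy: maximum days to remediate, by (asset_class, severity).
SLA_DAYS = {
    ("internet-facing", "critical"): 7,
    ("internet-facing", "high"): 15,
    ("internal", "critical"): 15,
    ("internal", "high"): 30,
}

def sla_adherence(closed_findings):
    """Return {business_unit: fraction of findings closed within SLA}."""
    met = defaultdict(int)
    total = defaultdict(int)
    for f in closed_findings:
        allowed = SLA_DAYS[(f["asset_class"], f["severity"])]
        elapsed = (f["closed"] - f["opened"]).days
        total[f["unit"]] += 1
        if elapsed <= allowed:
            met[f["unit"]] += 1
    return {unit: met[unit] / total[unit] for unit in total}

closed = [
    {"unit": "payments", "asset_class": "internet-facing", "severity": "critical",
     "opened": date(2025, 1, 1), "closed": date(2025, 1, 5)},
    {"unit": "payments", "asset_class": "internal", "severity": "high",
     "opened": date(2025, 1, 1), "closed": date(2025, 2, 15)},
    {"unit": "hr", "asset_class": "internal", "severity": "critical",
     "opened": date(2025, 1, 1), "closed": date(2025, 1, 10)},
]
print(sla_adherence(closed))  # {'payments': 0.5, 'hr': 1.0}
```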

Compliance Mandates

Satisfy POA&M reporting expectations and other requirements under frameworks such as FedRAMP, NIST, FISMA, and CMMC.

Operational Ownership Model

Formalize handoffs and approvals across Security, IT, and DevOps teams.

| Risk Goal | Required Operational Capabilities |
|---|---|
| Shrink exploitable exposure clusters | Correlated exposure analysis, exploit-aware scoring (EPSS/KEV), choke-point fix identification |
| Improve SLA adherence | Native ticketing, ownership mapping, automated reminders and escalations |
| Strengthen audit defensibility | Explainable prioritization, immutable audit trails, POA&M reporting |
| Accelerate mean time to remediation (MTTR) | Workflow orchestration, change window alignment, pre-approved automations |
| Align to CTEM framework | Continuous data ingestion, ongoing reassessment, executive-ready metrics |

Integrations and Data Quality Checklist

Many exposure management initiatives fail at the data layer, long before prioritization or automation come into play. Use these questions to evaluate the depth, fidelity, and durability of a platform's data handling:

| Topic | Questions |
|---|---|
| Data coverage | Which security and asset data sources are ingested (scanners, EDR/XDR, CSPM, CMDB, identity)? Are there gaps that could create blind spots in exposure visibility? |
| Connector depth and data fidelity | Do connectors preserve source-specific fields, states, and logic? Do they interpret and maintain source-specific meaning (e.g., status, remediation state, ownership, hierarchies)? |
| Data normalization and deduplication | Can the platform prove that duplicate findings across tools resolve down to a single, trackable exposure? How are assets and identities correlated across sources? How are conflicting signals handled (e.g., one tool reports a finding as critical, while another rates it differently)? |
| Lifecycle continuity | Can the platform track the same finding across scans, tools, and time? Are state changes (open, mitigated, reopened, accepted) preserved and reconciled? |
| Data enrichment and context | How is business context added (owner, criticality, application, environment)? Are external exposure and exploit signals continuously integrated? |
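To make the deduplication question concrete, here is a minimal sketch that collapses findings reported by different tools into one exposure keyed on a normalized asset identifier and vulnerability ID, keeping every source's severity rather than silently discarding conflicts. The normalization rule (lowercased hostname with the DNS domain stripped), the tool names, and the field names are simplifying assumptions; real platforms correlate assets on many more attributes.

```python
from collections import defaultdict

def normalize_asset(hostname):
    """Hypothetical normalization: lowercase and drop the DNS domain suffix."""
    return hostname.strip().lower().split(".")[0]

def deduplicate(findings):
    """Merge raw findings into exposures keyed by (normalized asset, vuln ID)."""
    exposures = defaultdict(lambda: {"sources": {}, "severities": set()})
    for f in findings:
        key = (normalize_asset(f["host"]), f["vuln_id"])
        exposure = exposures[key]
        exposure["sources"][f["tool"]] = f["severity"]
        exposure["severities"].add(f["severity"])
    for exposure in exposures.values():
        # Flag disagreement between tools instead of hiding it.
        exposure["conflict"] = len(exposure["severities"]) > 1
    return dict(exposures)

raw = [
    {"tool": "scanner_a", "host": "WEB01.corp.example", "vuln_id": "CVE-X", "severity": "critical"},
    {"tool": "scanner_b", "host": "web01", "vuln_id": "CVE-X", "severity": "high"},
    {"tool": "scanner_a", "host": "db02.corp.example", "vuln_id": "CVE-Y", "severity": "medium"},
]
merged = deduplicate(raw)
print(len(merged))                             # 2
print(merged[("web01", "CVE-X")]["conflict"])  # True
```

Keeping the per-source severities is a deliberate choice: it lets a reviewer see why two tools disagreed instead of trusting whichever value happened to win.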

Built by practitioners for practitioners, Nucleus Security’s platform continuously ingests, normalizes, and correlates data across 200+ sources into a unified, lifecycle-aware Live Data Core. It maintains a single, authoritative timeline of findings, ensuring data consistency, traceability, and real-time accuracy as new information is ingested and conditions change.

The Shift Toward EAPs

Three main forces are driving the shift toward EAPs: volume, velocity, and variety.

Volume

Enterprises manage thousands of assets across cloud, SaaS, IoT, and partners, creating millions of alerts that siloed or manual approaches cannot remediate at scale.

Velocity

New vulnerabilities, zero-days, and attack techniques appear every day, and exploitation often follows soon after disclosure. That pace demands continuous, automated exposure assessment instead of point-in-time snapshots.

Variety

Threats today exploit more than just patchable vulnerabilities, targeting misconfigured identities, unmanaged SaaS, compromised credentials, and other organizational security gaps. Hybrid and multi-cloud environments compound this complexity by introducing ephemeral assets and new attack paths.

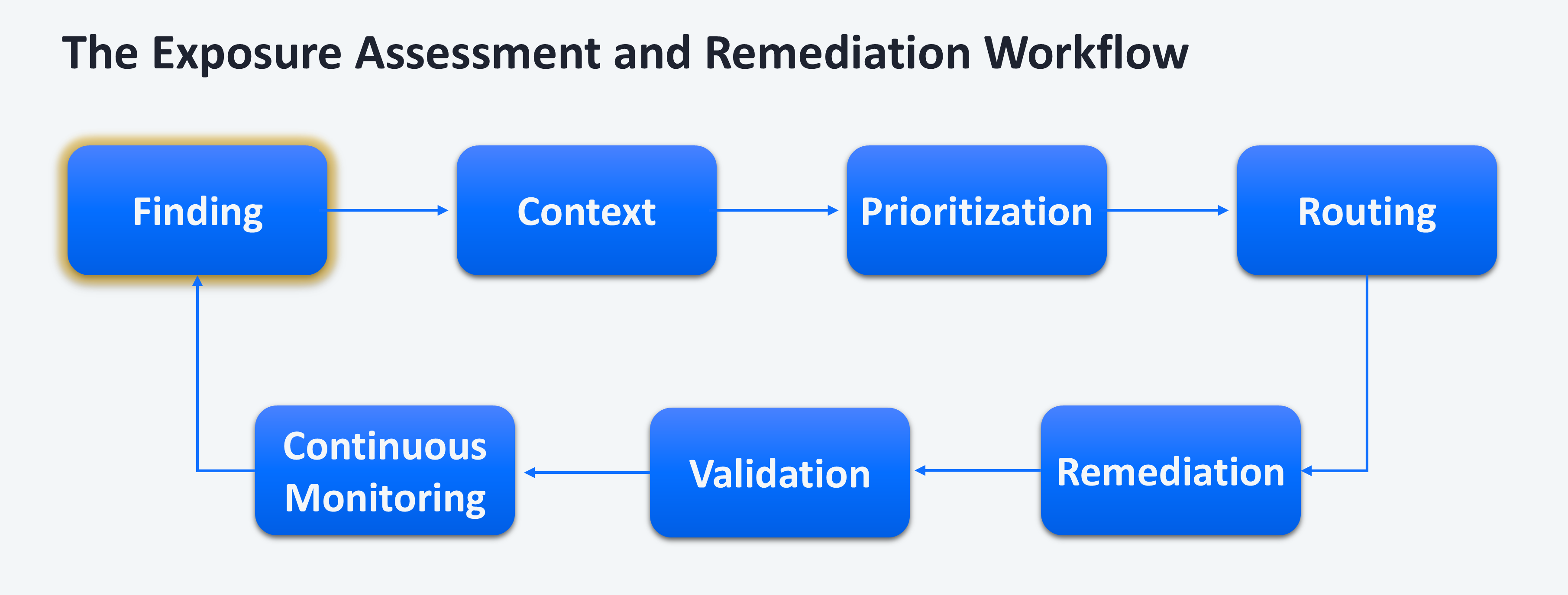

Correlated Exposure Analysis, Risk Prioritization, and Validation

Modern exposure assessment platforms combine correlation, prioritization, and validation into a continuous cycle. Each plays a distinct role in reducing risk. At enterprise scale, the challenge becomes determining which exposures actually matter and proving it.

Correlated Exposure Analysis: Creating Context

EAPs correlate vulnerabilities, misconfigurations, identity exposures, and asset context into a unified view of risk.

This shifts teams from isolated findings to connected exposure scenarios, allowing them to:

- Identify choke points where a single fix reduces multiple risks

- Understand how exposures relate to critical assets and identities

- Eliminate duplicate or fragmented findings across tools

This step depends on high-fidelity, normalized data. Without consistent asset, identity, and finding correlation over time, prioritization quickly breaks down.
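One simple way to surface choke points, in the sense used above of a single fix that reduces multiple risks, is to group correlated exposures by the remediation action that resolves them and rank actions by how many exposures, and how many critical assets, each one clears. The sketch assumes each exposure already carries a `fix` field (for example, a specific patch or configuration change) and an asset-criticality flag; both are hypothetical fields, and attack-path-based choke point analysis is considerably more involved.

```python
from collections import defaultdict

def rank_choke_points(exposures):
    """Rank remediation actions by exposures resolved, then critical assets among them."""
    resolved = defaultdict(list)
    for e in exposures:
        resolved[e["fix"]].append(e)
    ranked = sorted(
        resolved.items(),
        key=lambda item: (
            len(item[1]),                                    # exposures cleared
            sum(1 for e in item[1] if e["critical_asset"]),  # critical assets among them
        ),
        reverse=True,
    )
    return [(fix, len(items)) for fix, items in ranked]

exposures = [
    {"id": 1, "fix": "upgrade openssl", "critical_asset": True},
    {"id": 2, "fix": "upgrade openssl", "critical_asset": False},
    {"id": 3, "fix": "upgrade openssl", "critical_asset": True},
    {"id": 4, "fix": "disable legacy TLS", "critical_asset": False},
    {"id": 5, "fix": "rotate service credential", "critical_asset": True},
]
print(rank_choke_points(exposures))
# [('upgrade openssl', 3), ('rotate service credential', 1), ('disable legacy TLS', 1)]
```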

Multi-Tiered Risk Prioritization

Prioritization must be layered to be effective.

Tier 1: Broad risk-based prioritization (at scale)

Applies across all exposures using:

- Exploit intelligence (EPSS, KEV, threat feeds)

- Asset context (criticality, data sensitivity, exposure)

- Environmental signals (reachability, compensating controls, ownership)

This reduces the dataset to a small, high-risk subset that makes deeper analysis operationally feasible.
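A Tier 1 pass can be sketched as a filter that keeps exposures with an exploit signal on a meaningful asset and drops those already mitigated by a verified compensating control. The thresholds, criticality scale, field names, and rules below are hypothetical and would be tuned to your own risk tolerance.

```python
from dataclasses import dataclass

@dataclass
class Exposure:
    exposure_id: str
    in_kev: bool
    epss: float
    asset_criticality: int       # hypothetical scale: 1 (low) to 5 (mission critical)
    internet_facing: bool
    compensating_control: bool   # e.g., a verified WAF rule or network segmentation

EPSS_THRESHOLD = 0.10   # hypothetical cut-off
MIN_CRITICALITY = 3     # hypothetical cut-off

def tier1_filter(exposures):
    """Return the high-risk subset worth deeper analysis or validation."""
    subset = []
    for e in exposures:
        exploit_signal = e.in_kev or e.epss >= EPSS_THRESHOLD
        meaningful_asset = e.asset_criticality >= MIN_CRITICALITY or e.internet_facing
        if exploit_signal and meaningful_asset and not e.compensating_control:
            subset.append(e)
    return subset

backlog = [
    Exposure("E1", in_kev=True, epss=0.05, asset_criticality=4, internet_facing=False, compensating_control=False),
    Exposure("E2", in_kev=False, epss=0.60, asset_criticality=2, internet_facing=True, compensating_control=True),
    Exposure("E3", in_kev=False, epss=0.01, asset_criticality=5, internet_facing=True, compensating_control=False),
    Exposure("E4", in_kev=False, epss=0.35, asset_criticality=3, internet_facing=False, compensating_control=False),
]
print([e.exposure_id for e in tier1_filter(backlog)])  # ['E1', 'E4']
```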

Tier 2: Validation-informed refinement (selective)

Validation technologies (BAS, automated penetration testing) are applied to a subset of prioritized exposures to:

- Confirm exploitability in the real environment

- Test the effectiveness of existing controls

- Provide evidence to support remediation decisions

In practice, correlation and risk-based prioritization reduce the problem space to a manageable fraction of the original findings. Validation then focuses on what remains.

Validation in the CTEM Cycle

Validation is a defined stage in CTEM. It answers a critical question: Would this exposure actually lead to a successful attack?

But validation is inherently constrained:

- Limited coverage across large environments

- Lower frequency than continuous data ingestion

- Not all exposure types can be safely or practically tested

Because of this, validation depends on prioritization to focus its efforts, and validation results feed back to continuously improve prioritization.

Why This Model Works

Each layer solves a different problem:

- Correlation provides context across fragmented data

- Prioritization determines what matters at scale

- Validation confirms what is truly exploitable

Relying on any one in isolation creates gaps. Together, they create a system that is both scalable and defensible.

The result is better prioritization that creates repeatable, explainable risk reduction grounded in both context and evidence.

Risk Prioritization and Explainability Needs

Effective prioritization blends:

- CVSS severity, EPSS likelihood, and KEV confirmations of exploitation in the wild.

- Data sensitivity, business criticality, exposure context (internet-facing, compensating controls), and ownership.

- Time-based factors like patch availability and change windows.

Risk explainability is the transparency with which the platform shows how these inputs influenced the final ranking, so remediation teams and executive stakeholders understand why an issue is urgent and exactly what action reduces risk.
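One way to make a ranking explainable is to compute the score as a sum of named contributions and return that breakdown alongside the total. The additive model, weights, and factor names below are hypothetical, chosen only to show the pattern; they are not a recommended or vendor-specific formula.

```python
# Hypothetical weights for an additive, explainable risk score (0-100 scale).
WEIGHTS = {
    "cvss": 3.0,             # per CVSS point (max 30)
    "epss": 25.0,            # per unit of EPSS probability (max 25)
    "kev": 20.0,             # flat contribution if listed in CISA KEV
    "internet_facing": 10.0,
    "criticality": 3.0,      # per criticality level, 1-5 (max 15)
}

def explainable_score(f):
    """Return (total score, per-factor contributions) for one finding."""
    contributions = {
        "cvss": WEIGHTS["cvss"] * f["cvss"],
        "epss": WEIGHTS["epss"] * f["epss"],
        "kev": WEIGHTS["kev"] if f["in_kev"] else 0.0,
        "internet_facing": WEIGHTS["internet_facing"] if f["internet_facing"] else 0.0,
        "criticality": WEIGHTS["criticality"] * f["criticality"],
    }
    return round(sum(contributions.values()), 1), contributions

total, why = explainable_score(
    {"cvss": 7.5, "epss": 0.8, "in_kev": True, "internet_facing": True, "criticality": 4}
)
print(total)  # 84.5
for factor, points in sorted(why.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{factor:>16}: {points:5.1f}")
```

Because every point in the total traces back to a named input, a remediation owner can see that exploitation evidence, not just severity, drove the ranking.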

Remediation Orchestration and Workflow Automation

In most organizations, remediation breaks down at ownership, not prioritization, because ownership data is often incomplete, outdated, or inconsistent across systems. Turning insight into action is non-negotiable. Look for:

- Seamless conversion of prioritized exposures into tickets with correct owners, deduplicated tasks, and consistent mapping to enterprise workflows.

- Bi-directional integration with ServiceNow, Jira, and other ticketing and ITSM platforms with built-in support for custom schemas and workflows.

- SLA tracking, POA&M processing, and exception workflows with centralized evidence collection for audit-ready reporting and performance tracking.

- Integrations with DevOps and cloud environments to embed remediation where teams already work.

- Full audit trails for approvals, changes, and closures.
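The conversion step can be sketched as grouping prioritized exposures by resolved owner and remediation action, so each team receives one deduplicated ticket per fix with an SLA due date. The ownership map, fallback queue name, field names, and SLA values are hypothetical; a real integration would create and update these records through your ITSM platform's own API.

```python
from datetime import date, timedelta

# Hypothetical ownership map (e.g., derived from CMDB application records).
OWNER_BY_APP = {"billing": "team-payments", "intranet": "team-it-ops"}
SLA_DAYS = {"critical": 7, "high": 30}  # hypothetical policy

def build_tickets(exposures, today):
    """Group exposures into one ticket per (owner, fix), due by the strictest SLA."""
    tickets = {}
    for e in exposures:
        owner = OWNER_BY_APP.get(e["app"], "unassigned-triage")  # route unknowns for review
        due = today + timedelta(days=SLA_DAYS[e["severity"]])
        ticket = tickets.setdefault(
            (owner, e["fix"]), {"owner": owner, "fix": e["fix"], "exposures": [], "due": due}
        )
        ticket["exposures"].append(e["id"])
        ticket["due"] = min(ticket["due"], due)  # strictest SLA in the group wins
    return list(tickets.values())

exposures = [
    {"id": "X1", "app": "billing", "fix": "patch libfoo", "severity": "high"},
    {"id": "X2", "app": "billing", "fix": "patch libfoo", "severity": "critical"},
    {"id": "X3", "app": "legacy-crm", "fix": "disable SMBv1", "severity": "high"},
]
for t in build_tickets(exposures, today=date(2025, 3, 1)):
    print(t["owner"], t["fix"], t["exposures"], t["due"])
```

Routing unmatched applications to an explicit triage queue, rather than guessing an owner, keeps ownership gaps visible instead of letting tickets stall silently.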

The Role of AI and Automation Governance

AI is not just an accelerator for exposure management workflows. When implemented correctly, it becomes a mechanism for enforcing consistency, reducing decision fatigue, and operationalizing governance at scale.

In practice, AI can support four critical areas:

#1 Data Enrichment and Normalization at Scale

AI can resolve inconsistencies across fragmented data sources by:

- Mapping assets, identities, and findings across tools with incomplete or conflicting identifiers

- Inferring missing context such as ownership, application relationships, or business criticality

- Continuously reconciling new data as environments change

This reduces the manual effort required to maintain a reliable data foundation, and that manual effort is one of the most common failure points in exposure management programs.

#2 Consistent, Explainable Prioritization

AI can apply and enforce prioritization logic across large, heterogeneous datasets by:

- Combining exploit intelligence, asset context, and environmental signals into a unified risk model

- Identifying patterns in historical remediation and incident data to refine prioritization over time

- Highlighting “choke point” exposures that impact multiple risk paths

Importantly, this must remain explainable. Security and IT teams need to understand why something is prioritized to trust and act on it.

#3 Governance-aware Workflow Automation

AI enables automation that aligns with enterprise policies rather than bypassing them. This includes:

- Recommending remediation actions that are appropriate for the asset type, environment, and change controls

- Dynamically assigning ownership based on observed patterns (e.g., past fixes, system relationships)

- Enforcing SLAs, escalation paths, and exception handling based on policy

Instead of hardcoding workflows, AI allows organizations to adapt automation to real operating conditions without constant manual tuning.

#4 Continuous Validation of Decisions and Outcomes

AI can monitor how remediation actions impact risk over time by:

- Detecting when previously mitigated exposures reappear or evolve

- Identifying breakdowns in ownership, routing, or SLA adherence

- Surfacing discrepancies between expected and actual risk reduction

This creates a feedback loop where governance is not static, but continuously evaluated and improved.

Governance Still Matters

AI makes governance scalable, but it certainly doesn’t replace it. Without clear policies, ownership models, and risk tolerance definitions, AI-driven automation will amplify inconsistency rather than reduce it.

To use AI effectively, organizations still need:

- Transparency into decision logic and scoring inputs

- Human approval gates where risk tolerance requires oversight

- Immutable audit logs for all recommendations and actions

- Alignment with change management and compliance processes

Automation governance ensures that AI-driven actions remain controlled, auditable, and consistent with business risk priorities.

The practical value of AI in exposure management lies in reducing the number of decisions humans have to make while increasing consistency across those decisions. It is not simply about achieving a better scoring model.

Deployment Flexibility and Speed

Prioritize platforms that deliver quick wins without limiting long-term program evolution:

- Native integrations across security and IT systems with minimal configuration overhead.

- Configuration-led customization that enables policy, workflow, scoring, and ownership models to be tailored without custom engineering.

- Flexible deployment options, including SaaS and GovCloud, bring-your-own-key (BYOK) encryption for regulated environments, and multi-tenancy support.

- Scalable architecture that handles high data volumes and complex organizational structures.

- Ability to expand coverage (new tools, teams, use cases) without re-architecting data pipelines or workflows.

- FedRAMP-authorized and government-ready controls.

Running an Effective Proof of Value

Design your POV to measure real impact:

- Define success criteria up front: reduction in high-risk exposure clusters, time-to-close, SLA adherence, audit readiness.

- Validate integration breadth, normalization quality, and contextual prioritization across representative business units.

- Track remediation throughput gains and ownership accuracy in ITSM.

- Include both Security and IT stakeholders, with clear handoffs and approval workflows.

- Benchmark before/after metrics and require explainable rationale for the platform’s top recommendations.

- Validate what happens when data is incomplete or inconsistent. Most platforms perform well on clean datasets, but they can degrade quickly when ownership, asset data, or scan coverage is imperfect.

Measuring Impact and Success Metrics

Define Success Criteria Tied to Outcomes, Not Features

Focus on measurable improvements like reduction in prioritized high-risk findings, time-to-close, SLA adherence, POA&M completeness, and audit readiness. Avoid vague goals like “better visibility” or “improved prioritization.”
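Outcome metrics like these are straightforward to compute once the underlying data is trustworthy. The sketch below derives the percentage reduction in prioritized high-risk findings and mean time to remediation (MTTR) from before/after POV snapshots; the numbers are made-up sample values, not benchmark results. Reporting the median alongside the mean is a design choice that exposes skew from a few long-running fixes.

```python
from statistics import mean, median

def percent_reduction(before, after):
    """Percentage drop in a count between a baseline and a later snapshot."""
    if before == 0:
        return 0.0
    return 100.0 * (before - after) / before

# Hypothetical sample data, not real results.
high_risk_before, high_risk_after = 480, 312
days_to_close = [3, 5, 8, 12, 21, 40]  # closed findings during the POV window

print(f"{percent_reduction(high_risk_before, high_risk_after):.1f}% fewer high-risk findings")  # 35.0%
print(f"MTTR: {mean(days_to_close):.1f} days, median: {median(days_to_close):.1f} days")
# MTTR: 14.8 days, median: 10.0 days
```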

Use Real, Messy Data

Include multiple scanners, asset sources, and business units with known duplication, ownership gaps, and conflicting signals. A clean dataset will mask normalization and correlation weaknesses.

Validate Data Integrity Before Evaluating Prioritization

Confirm deduplication accuracy, asset and identity correlation, and consistency across sources. If the data foundation is wrong, prioritization and reporting will be misleading.

Test Prioritization Under Change, Not a Point in Time

Introduce new data (e.g., new scans, intelligence) during the POV and validate that risk is re-prioritized correctly.

Check Prioritization Explainability

Require clear rationale for prioritization decisions. Don’t settle for black-box prioritization.

Prove Execution in Downstream Systems

Validate ticket creation, ownership assignment, and bidirectional updates. Ensure workflows align to existing ITSM models, not simplified demos.

Track Ownership Accuracy

Measure whether issues are routed to the correct teams. Incorrect ownership or stalled tickets should be treated as failures.
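Ownership accuracy can be scored by comparing the team each ticket was first routed to against the team that actually resolved it, while flagging open tickets that have gone idle. Treating the resolving team as ground truth, and the idle threshold, are assumptions for this sketch; during a POV you may prefer an explicit answer key agreed with IT.

```python
def ownership_accuracy(tickets):
    """Fraction of resolved tickets whose initial assignee matches the resolving team."""
    resolved = [t for t in tickets if t.get("resolved_by")]
    if not resolved:
        return 0.0
    correct = sum(1 for t in resolved if t["assigned_to"] == t["resolved_by"])
    return correct / len(resolved)

def stalled(tickets, max_idle_days):
    """IDs of open tickets with no activity for longer than max_idle_days."""
    return [t["id"] for t in tickets if not t.get("resolved_by") and t["idle_days"] > max_idle_days]

tickets = [
    {"id": "T1", "assigned_to": "net-ops", "resolved_by": "net-ops", "idle_days": 0},
    {"id": "T2", "assigned_to": "app-team", "resolved_by": "platform", "idle_days": 0},
    {"id": "T3", "assigned_to": "db-team", "resolved_by": "db-team", "idle_days": 0},
    {"id": "T4", "assigned_to": "unassigned", "resolved_by": None, "idle_days": 45},
]
print(ownership_accuracy(tickets))         # 0.6666666666666666
print(stalled(tickets, max_idle_days=30))  # ['T4']
```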

Test Exception Management as a First-class Workflow

Create risk acceptances with approvals and expirations, then ingest new data and confirm decisions persist. If exceptions reappear as new findings, the model is broken.
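The persistence check can be expressed as a small test: record an approved risk acceptance with an expiry date, re-ingest the same finding, and confirm it stays accepted until the exception expires. The in-memory store, exposure key, and field names are hypothetical; the point is that acceptances attach to a durable exposure identity rather than to a single scan result.

```python
from datetime import date

class ExposureStore:
    """Hypothetical store keyed by a stable exposure identity (asset, vuln ID)."""

    def __init__(self):
        self.status = {}
        self.exceptions = {}  # key -> (approver, expires_on)

    def accept_risk(self, key, approver, expires_on):
        """Record an approved risk acceptance with an expiration date."""
        self.exceptions[key] = (approver, expires_on)
        self.status[key] = "accepted"

    def ingest(self, key, today):
        """Process a new scan result for an existing or new exposure."""
        exception = self.exceptions.get(key)
        if exception and today <= exception[1]:
            self.status[key] = "accepted"   # decision persists across re-ingest
        else:
            self.exceptions.pop(key, None)  # expired or never accepted
            self.status[key] = "open"
        return self.status[key]

store = ExposureStore()
key = ("web01", "CVE-X")
store.accept_risk(key, approver="ciso", expires_on=date(2025, 6, 30))
print(store.ingest(key, today=date(2025, 5, 1)))  # accepted
print(store.ingest(key, today=date(2025, 7, 1)))  # open
```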

Include Both Security and IT Stakeholders in Real Workflows

Validate handoffs, approvals, and accountability across teams. A POV that only involves security does not reflect real execution.

Validate Multi-tenancy and Tenant Isolation

For MSSP or multi-org environments, confirm strict tenant isolation, per-tenant ownership and workflows, and correct routing/reporting by tenant. Ensure no data leakage and that policies and SLAs can be applied independently per tenant.
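A basic isolation property to probe is that every read path is scoped by tenant and that policies such as SLAs resolve per tenant. The sketch below enforces a tenant check at the query layer and raises if a caller requests another tenant's data; the class, tenant names, and policy values are hypothetical, and production isolation also depends on storage, authentication, and reporting layers.

```python
class TenantScopedRepository:
    """Hypothetical repository that refuses cross-tenant reads."""

    def __init__(self, findings, sla_policies):
        self._findings = findings          # each finding carries a "tenant" field
        self._sla_policies = sla_policies  # tenant -> {severity: days}

    def findings_for(self, caller_tenant, requested_tenant):
        """Return the requested tenant's findings, only if the caller owns them."""
        if caller_tenant != requested_tenant:
            raise PermissionError(f"{caller_tenant} may not read {requested_tenant} data")
        return [f for f in self._findings if f["tenant"] == requested_tenant]

    def sla_days(self, tenant, severity):
        """Resolve the SLA from the tenant's own policy, never a shared default."""
        return self._sla_policies[tenant][severity]

repo = TenantScopedRepository(
    findings=[
        {"id": 1, "tenant": "acme", "severity": "critical"},
        {"id": 2, "tenant": "globex", "severity": "critical"},
    ],
    sla_policies={"acme": {"critical": 7}, "globex": {"critical": 14}},
)
print([f["id"] for f in repo.findings_for("acme", "acme")])  # [1]
print(repo.sla_days("globex", "critical"))                   # 14
try:
    repo.findings_for("acme", "globex")
except PermissionError as err:
    print("blocked:", err)
```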

Conclusion: Making a Confident Choice

If an exposure assessment platform can’t demonstrate consistent prioritization, accurate ownership, and measurable reduction in high-risk exposures during a proof of value, it won’t improve your program at scale.

Demand integration depth, contextual validation, and workflow automation that accelerates real remediation. Avoid surface-level visualization without operational impact. Move quickly from evaluation to a proof of value with defined success metrics.

Engage Nucleus Security experts for a tailored POV that demonstrates correlated exposure analysis, orchestration at scale, and compliance-ready reporting.

Frequently Asked Questions when Selecting an Exposure Assessment Platform

What differentiates exposure assessment platforms from traditional vulnerability management?

Exposure assessment platforms unify data across tools and apply environmental context to prioritize exposures that materially increase business risk, while traditional vulnerability management primarily lists and re-ranks CVEs.

How does correlated exposure analysis improve prioritization?

Correlated exposure analysis improves prioritization by combining vulnerability, identity, asset, and exploit intelligence. Correlated analysis highlights choke point remediations that reduce multiple risk vectors at once.

What integrations should a mature exposure assessment platform support?

Important exposure assessment integrations include vulnerability scanners, EDR/XDR, CSPM, CMDB, IAM, ITSM, DevOps, and code analysis tools to produce a unified and accurate risk view.

Why is explainability important?

Explainable exposure prioritization builds stakeholder trust, enables decisive action, supports executive reporting, and strengthens audit defensibility.

How can organizations ensure safe automation?

Safe automation requires transparent logic, human approval controls where needed, and comprehensive audit trails for every automated remediation action.

Gartner, Magic Quadrant for Exposure Assessment Platforms, Mitchell Schneider, Dhivya Poole, Jonathan Nunez, 10 November 2025.

Gartner and Magic Quadrant are trademarks of Gartner, Inc. and/or its affiliates.

Gartner does not endorse any company, vendor, product or service depicted in its publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner publications consist of the opinions of Gartner’s business and technology insights organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this publication, including any warranties of merchantability or fitness for a particular purpose.
